|

This code loops through the points, subtracting each point's Y coordinate from the line's Y coordinate at the point's X position. It squares that error and adds it to the total. When the loop finishes, the method returns the total of the squared errors.

As a mathematical equation, the error function E is:

![Sum[(yi - (m * xi + b))^2]](howto_linear_least_squares_eq1.png)

where the sum is performed over all of the points (xi, yi).
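As a rough illustration, the same error function can be sketched in Python. This is not the article's Visual Basic code: the name `error_squared` and the sample data are hypothetical, and points are assumed to be plain (x, y) tuples.

```python
def error_squared(points, m, b):
    """Return the sum of squared vertical distances between
    the points and the line y = m * x + b."""
    total = 0.0
    for x, y in points:
        dy = y - (m * x + b)   # vertical error for this point
        total += dy * dy       # accumulate the squared error
    return total


# Illustrative data only: three points that lie exactly on y = 2x + 1.
sample_points = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]
```

For the sample points, the line y = 2x + 1 gives an error of 0, and any other choice of m and b gives a larger value.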

To find the least squares fit, you need to minimize this function E(m, b). That sounds intimidating until you remember that the xi and yi values are all known--they're the values you're trying to fit with the line.

The only variables in this equation are m and b, so it's relatively easy to minimize by using a little calculus. Simply take the partial derivatives of E with respect to m and b, set the two resulting expressions equal to 0, and solve for m and b.

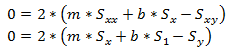

Taking the partial derivative with respect to m and rearranging a bit to gather common terms and pull constants out of the sums, you get:

![2 * (m * Sum[xi^2] + b * Sum[xi] - Sum[xi * yi])](howto_linear_least_squares_eq2.png)

Taking the partial derivative with respect to b and rearranging a bit, you get:

![2 * (m * Sum[xi] + b * Sum[1] - Sum[yi])](howto_linear_least_squares_eq3.png)
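For reference, the intermediate chain-rule steps behind these two results can be written out as follows. This is a sketch that uses the same E, m, b, xi, and yi as the error function above.

$$
\begin{aligned}
\frac{\partial E}{\partial m} &= \sum_i 2\,\bigl(y_i - (m x_i + b)\bigr)\,(-x_i) = 2\left(m \sum_i x_i^2 + b \sum_i x_i - \sum_i x_i y_i\right) \\
\frac{\partial E}{\partial b} &= \sum_i 2\,\bigl(y_i - (m x_i + b)\bigr)\,(-1) = 2\left(m \sum_i x_i + b \sum_i 1 - \sum_i y_i\right)
\end{aligned}
$$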

To find the minimum for the error function, you set these two equations equal to 0 and solve for m and b.

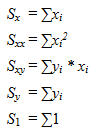

To make working with the equations easier, let:

If you make these substitutions and set the equations equal to 0 you get:

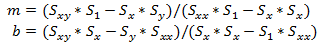

Solving for m and b gives:

Again, these look like intimidating equations, but all of the S's are values that you can calculate from the data points that you are trying to fit.
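To make that concrete, here is a minimal Python sketch of the calculation. It is an illustration, not the article's Visual Basic code: `find_linear_least_squares_fit` is a hypothetical name, the points are assumed to be plain (x, y) tuples rather than PointF values, and the S's are taken to be S1 = Sum[1], Sx = Sum[xi], Sy = Sum[yi], Sxx = Sum[xi^2], and Sxy = Sum[xi * yi].

```python
def find_linear_least_squares_fit(points):
    """Return (m, b) for the least squares line y = m * x + b."""
    s1 = len(points)                      # S1  = Sum[1], the number of points
    sx = sum(x for x, _ in points)        # Sx  = Sum[xi]
    sy = sum(y for _, y in points)        # Sy  = Sum[yi]
    sxx = sum(x * x for x, _ in points)   # Sxx = Sum[xi^2]
    sxy = sum(x * y for x, y in points)   # Sxy = Sum[xi * yi]

    denominator = sxx * s1 - sx * sx
    if denominator == 0:
        # No points, or every point shares the same X value,
        # so no unique line of the form y = m * x + b exists.
        raise ValueError("the points do not determine a unique line")

    m = (sxy * s1 - sx * sy) / denominator
    b = (sxx * sy - sx * sxy) / denominator
    return m, b
```

For example, the points (0, 1), (1, 3), and (2, 5) lie exactly on y = 2x + 1, so the function returns m = 2 and b = 1 for them. The explicit check on the denominator is a design choice of this sketch; it guards against a vertical set of points, where the slope is undefined.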

The following code calculates the S's and uses them to find the linear least squares fit for the points in a List(Of PointF).

|